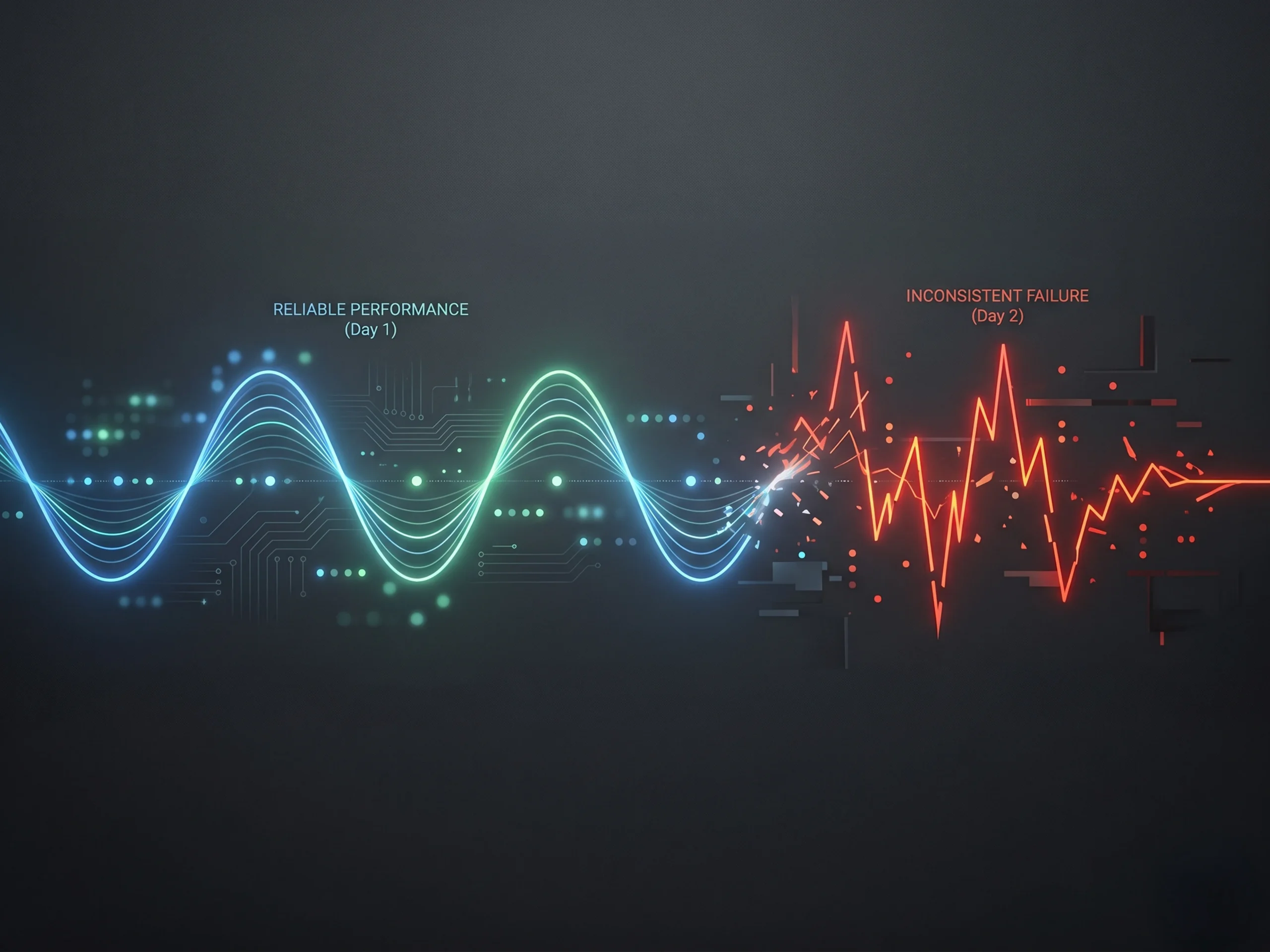

If you’ve spent any time building with AI, you’ve likely experienced this.

One day, the system feels incredible. It answers questions well, generates useful outputs, and starts to feel like something you could actually rely on. The next day, with a slightly different input, it misses the point entirely. It hallucinates. Or it gives you something so generic that it is unusable.

Same model. Same tools. Completely different outcome.

That inconsistency is what frustrates teams the most. It is also what prevents many growth-stage companies from moving AI from experimentation into real production workflows.

At a recent AIConf in Ahmedabad, Ravi Bhatia, Senior Software Engineering Manager at Loopio, framed the issue clearly. The problem is not the model. It is how you are feeding it context.

The Hidden Variable Most Teams Ignore

When teams think about improving AI performance, they usually focus on the obvious levers: better models, better prompts, or more features. But as Ravi Bhatia emphasized in his talk, the real driver of performance is far simpler and far more often overlooked.

It is what information is actually being passed into the system, and how it is structured.

As he put it, output quality is directly tied to context. Garbage in, garbage out.

That has deep implications. Every response is shaped not just by the question being asked, but by everything surrounding it. Conversation history, retrieved data, tool outputs, memory, and system instructions all compete for attention inside a limited window. When that system is not designed well, performance becomes unpredictable.

Why Performance Degrades as You Scale

Ravi Bhatia spent time outlining why systems that work early often break as they scale.

Most AI systems perform well at the beginning because they are simple. Limited inputs, narrow use cases, and clean prompts create clarity. But as companies grow their usage, complexity increases. More tools are connected, more data is pulled in, and more interactions are layered into the system.

At that point, teams typically fall into one of two traps.

Some overload the system. Every message, every tool response, and every piece of data gets appended to the context. Costs rise, latency climbs, and accuracy drops as the model struggles to focus.

Others provide too little context. The system lacks the information it needs, which leads to hallucinations, irrelevant answers, and wasted time. Bhatia called out both of these failure modes explicitly, noting that they cost teams not just money, but trust.

For growth-stage companies, this is often the moment where confidence in AI starts to erode.

More Data Is Not the Answer

One of the most important insights from Bhatia’s session is that more information does not lead to better results.

In fact, as context grows, models become less effective at reasoning over it. Important details get buried, earlier information is forgotten, and outputs degrade. He described this as context rot, where the system technically has the right information but cannot reliably surface it.

The principle that follows is simple but powerful. Fewer tokens, higher signal.

This is where discipline shows up for growth-stage teams. It means selecting relevant tools instead of exposing every possible capability. It means referencing documents instead of loading entire files. It means deciding what belongs in short-term context versus long-term memory.

Bhatia used a helpful analogy that resonates with technical teams. Context is your RAM. You would not load your entire hard drive into memory, and the same principle applies here.
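To make the RAM analogy concrete, here is a minimal sketch of a context budget. It is an illustration, not something shown in the talk: the `Message` type, the `fit_to_budget` helper, and the word-count token estimate are all assumptions, and a real system would count tokens with the model's own tokenizer.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str      # "system", "user", "assistant", or "tool"
    content: str


def estimate_tokens(text: str) -> int:
    # Assumption: one token per whitespace-separated word.
    # This is a crude stand-in for a real tokenizer.
    return len(text.split())


def fit_to_budget(messages: list[Message], budget: int) -> list[Message]:
    """Keep every system message, then the newest other messages that fit."""
    system = [m for m in messages if m.role == "system"]
    rest = [m for m in messages if m.role != "system"]

    remaining = budget - sum(estimate_tokens(m.content) for m in system)
    kept: list[Message] = []
    # Walk backwards so the most recent turns are considered first.
    for message in reversed(rest):
        cost = estimate_tokens(message.content)
        if cost > remaining:
            # Stop at the first message that does not fit, so the kept
            # history stays one contiguous run of recent turns.
            break
        kept.append(message)
        remaining -= cost

    # Restore chronological order before handing the context to the model.
    return system + list(reversed(kept))


history = [
    Message("system", "You answer questions about our product docs."),
    Message("user", "What plans do you offer?"),
    Message("assistant", "We offer Starter, Growth, and Enterprise plans."),
    Message("tool", "pricing_table: " + "row " * 50),
    Message("user", "Which plan includes SSO?"),
]

trimmed = fit_to_budget(history, budget=20)
for m in trimmed:
    print(m.role, "|", m.content)
```

With this toy budget, the oversized tool output and everything older than it are left out, which is the point: a bulky payload is better referenced or summarized than pasted into the window in full.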

AI Is Now an Infrastructure Problem

Another key point Bhatia made is that context is not just a quality issue. It is an infrastructure issue.

Every token has a cost, so larger contexts make a system both slower and more expensive. He highlighted that computational complexity grows with context length in ways that directly impact latency and cost. In a standard transformer, for example, self-attention work scales roughly quadratically with the number of tokens.
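A back-of-the-envelope sketch shows how quickly this adds up. The price, request volume, and context sizes below are hypothetical placeholders, not figures from the talk or from any provider's price list.

```python
def input_cost_usd(context_tokens: int, requests: int,
                   usd_per_million_tokens: float) -> float:
    """Input-token spend for a batch of requests with the same context size."""
    return context_tokens * requests * usd_per_million_tokens / 1_000_000


def attention_pairs(context_tokens: int) -> int:
    # Standard self-attention relates every token to every other token,
    # so the work grows with the square of the context length.
    return context_tokens ** 2


PRICE = 3.00          # hypothetical USD per million input tokens
REQUESTS = 100_000    # hypothetical monthly request volume

lean = input_cost_usd(2_000, REQUESTS, PRICE)
bloated = input_cost_usd(20_000, REQUESTS, PRICE)

print(f"lean context:    ${lean:,.2f} per month")
print(f"bloated context: ${bloated:,.2f} per month")
print("attention work ratio:", attention_pairs(20_000) // attention_pairs(2_000))
```

Under these assumptions, a tenfold larger context means a tenfold larger input bill, while the attention work inside the model grows a hundredfold.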

This is where techniques like prompt caching become critical. If your system structure is consistent, you can reuse large portions of context at a fraction of the cost. If it is not, you lose that efficiency entirely.
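Provider-side prompt caching generally works by matching an identical prefix across requests, so the practical rule is to put stable content first and volatile content last. The sketch below illustrates only that ordering. The constants, `build_prompt`, and `prefix_key` are hypothetical names, and the hash merely stands in for whatever a provider uses internally to recognize a repeated prefix.

```python
import hashlib

# Stable content: identical on every request, so it can be cached.
STATIC_SYSTEM = "You are a support assistant. Answer only from the given context."
STATIC_TOOLS = "Available tools: search_docs, get_ticket"


def build_prompt(question: str, retrieved: list[str]) -> tuple[str, str]:
    """Return (prefix, suffix): stable parts first, per-request parts last."""
    prefix = "\n\n".join([STATIC_SYSTEM, STATIC_TOOLS])
    suffix = "\n\n".join([
        "Context:\n" + "\n".join(retrieved),
        "Question: " + question,
    ])
    return prefix, suffix


def prefix_key(prefix: str) -> str:
    # Illustration only: a fingerprint showing that the prefix is byte-identical.
    return hashlib.sha256(prefix.encode("utf-8")).hexdigest()


prefix_a, suffix_a = build_prompt("Which plan includes SSO?", ["SSO is in Enterprise."])
prefix_b, suffix_b = build_prompt("How do I reset a password?", ["Use the account page."])

print(prefix_key(prefix_a) == prefix_key(prefix_b))  # same prefix, cache-friendly
print(suffix_a == suffix_b)                          # suffix differs per request
```

If a timestamp, user name, or retrieved document were interpolated into the top of the prompt instead, the prefix would differ on every call and nothing could be reused.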

For growth-stage startups, this matters more than it might seem. It impacts margins, pricing models, and the ability to scale AI features sustainably.

Where the Best Teams Focus

Ravi Bhatia also made it clear where teams should focus if they want to improve performance quickly.

Retrieval.

Getting the right information at the right time has an outsized impact on system performance. Most teams underestimate how nuanced this is. Keyword search alone is not enough. Semantic understanding is required to match intent, and the best systems combine both approaches.
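One common way to combine keyword and semantic results is reciprocal rank fusion (RRF). The talk is not quoted as naming a specific method, so treat this as one possible sketch: the two input rankings are hypothetical outputs of a keyword search and a vector search, and `k=60` is a commonly used default rather than a tuned value.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one ranking.

    Each document earns 1 / (k + rank) from every list it appears in,
    so documents ranked well by both retrievers rise to the top.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest score first; break ties alphabetically for stable output.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results for the query "single sign-on pricing".
keyword_hits = ["doc_sso", "doc_pricing", "doc_api"]      # exact term matches
semantic_hits = ["doc_login", "doc_sso", "doc_pricing"]   # embedding similarity

fused = reciprocal_rank_fusion([keyword_hits, semantic_hits])
print(fused)
```

RRF uses only rank positions, which sidesteps the problem that keyword scores and embedding similarities live on different scales.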

He also highlighted structural challenges like the “lost in the middle” problem, where models pay more attention to information at the beginning and end of the context window than the middle.
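A simple mitigation, sketched here under the assumption that retrieved chunks arrive sorted from most to least relevant, is to place the strongest chunks at the edges of the context and let the weakest sit in the middle. The function name `place_best_at_edges` is hypothetical.

```python
def place_best_at_edges(chunks_by_relevance: list[str]) -> list[str]:
    """Reorder chunks so the most relevant sit at the start and end.

    Input is sorted most relevant first. Even-indexed chunks fill the
    front in order; odd-indexed chunks fill the back in reverse, which
    leaves the least relevant chunks in the middle.
    """
    front: list[str] = []
    back: list[str] = []
    for index, chunk in enumerate(chunks_by_relevance):
        if index % 2 == 0:
            front.append(chunk)
        else:
            back.append(chunk)
    return front + back[::-1]


ranked = ["A", "B", "C", "D", "E"]  # A is most relevant, E is least
ordered = place_best_at_edges(ranked)
print(ordered)
```

Here the top two chunks, A and B, end up first and last, and the weakest chunk, E, lands in the middle where a missed detail costs the least.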

For growth-stage companies, improving retrieval is often the highest-ROI investment they can make in AI performance.

Why This Becomes a Leadership Issue

As systems scale, Bhatia emphasized that this stops being just a technical problem and becomes a leadership one.

How disciplined is the team in how they build? Are they measuring performance or relying on intuition? Do they have a clear definition of what “good” looks like?

He cautioned against rushing from demo to production without proper evaluation. Instead, he recommended building “golden sets” of test cases that reflect real-world scenarios and using them to continuously measure performance.
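A golden set can start very small. The sketch below is a minimal harness under stated assumptions: `GoldenCase`, `evaluate`, and the substring-based pass check are hypothetical choices, and `fake_assistant` is a canned stand-in for the real system being tested.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class GoldenCase:
    question: str
    must_include: list[str]   # terms a correct answer has to mention


def evaluate(answer_fn: Callable[[str], str], cases: list[GoldenCase]) -> float:
    """Return the fraction of golden cases whose answer contains every required term."""
    if not cases:
        raise ValueError("golden set is empty")
    passed = 0
    for case in cases:
        answer = answer_fn(case.question).lower()
        if all(term.lower() in answer for term in case.must_include):
            passed += 1
    return passed / len(cases)


def fake_assistant(question: str) -> str:
    # Stand-in for the real pipeline; replace with a call into your system.
    canned = {
        "Which plan includes SSO?": "SSO is included in the Enterprise plan.",
    }
    return canned.get(question, "I'm not sure.")


golden_set = [
    GoldenCase("Which plan includes SSO?", ["Enterprise"]),
    GoldenCase("What is the refund window?", ["30 days"]),
]

pass_rate = evaluate(fake_assistant, golden_set)
print(f"pass rate: {pass_rate:.0%}")
```

Running the same set after every prompt, retrieval, or model change turns "it feels better" into a number that can be tracked over time. Substring checks are deliberately simple here; stricter graders can replace them once the set exists.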

This is what separates teams that experiment from teams that scale.

The Bottom Line

AI does not feel inconsistent because the model is unpredictable.

It feels inconsistent because most of the systems feeding it are.

Ravi Bhatia’s core message was clear. If you want AI to work consistently, you have to be intentional about context. What goes in, what stays out, and how information flows through the system all matter.

For growth-stage companies, this is one of the most important shifts to internalize. The teams that treat context as a first-class problem will build systems that are faster, more accurate, and more cost-effective.

Because in the end, AI is not just about what the model can do.

It is about what you enable it to do.
