I still remember the first time I used the internet.

It was the mid-1990s, sitting in my college dorm room, listening to that familiar AOL dial-up tone while waiting for a single web page to load. Even checking a stock price could take long enough to make you wonder if it was worth it.

Back then, speed was the limiting factor. The internet worked, but just barely.

Then things started to change. Connections got faster, pages loaded instantly, and something that felt experimental became something you used every day.

AI seems like it’s at a similar moment right now.

Up until recently, if you wanted to run a powerful AI model, you needed access to a data center. That meant expensive hardware, specialized chips and a constant draw of electricity just to keep it running.

But Google Research recently published a paper that could cut the cost of running every AI model on earth by 80%.

It’s called TurboQuant.

And once you understand what it does, you understand where the next $100 billion in AI infrastructure savings could come from.

The Real Cost of Intelligence

Right now, the AI boom is running into a very real constraint.

Not intelligence, but cost.

Companies like Microsoft (Nasdaq: MSFT), Alphabet (Nasdaq: GOOG), Amazon (Nasdaq: AMZN) and Meta (Nasdaq: META) are spending at a pace that would have been hard to imagine just a few years ago.

This year alone, they’re expected to spend around $665 billion on data centers, chips and power just to keep these systems running.

The logic behind all this spending is sound.

If you want better artificial intelligence, you need more compute. And more compute requires more servers, more GPUs and more energy.

But TurboQuant suggests that the next leap in AI could come from getting the same results with far less infrastructure.

To understand why, it helps to understand how AI models are built.

At their core, these models are just enormous collections of numbers. Those numbers store what the system has learned, and the more detail they contain, the more reliable the model becomes.

That’s why most systems store each of those numbers using about 16 bits of information.

TurboQuant can cut that down to as little as 2 bits.

Normally, that would break the model. The answers would degrade, outputs would become unstable and the whole system would stop being useful.

What Google figured out is how to shrink the model without losing the information that actually matters.

Instead of compressing everything the same way, it treats different parts of the model differently. The important parts keep more detail. The rest get compressed much more aggressively.

Then it puts everything back together so the system still produces consistent results.
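To make that idea concrete, here is a minimal sketch in Python. It is not Google’s actual TurboQuant algorithm, which this article doesn’t spell out. It is a generic illustration of mixed-precision compression, and it assumes that the “important” numbers are simply the largest ones. The function names, the toy weights and the `keep_fraction` setting are all made up for this example.

```python
def quantize_uniform(values, bits):
    """Round each value to one of 2**bits evenly spaced levels between min and max."""
    levels = 2 ** bits - 1
    lo, hi = min(values), max(values)
    if hi == lo:
        return list(values)
    step = (hi - lo) / levels
    return [lo + round((v - lo) / step) * step for v in values]


def mixed_precision(values, keep_fraction=0.1, low_bits=2):
    """Keep the largest-magnitude values untouched; squeeze the rest into low_bits."""
    n_keep = max(1, int(len(values) * keep_fraction))
    # Assumption for this sketch: "important" means largest in magnitude.
    by_size = sorted(range(len(values)), key=lambda i: abs(values[i]), reverse=True)
    important = set(by_size[:n_keep])
    rest_idx = [i for i in range(len(values)) if i not in important]
    rest_q = quantize_uniform([values[i] for i in rest_idx], low_bits)
    out = list(values)
    for i, q in zip(rest_idx, rest_q):
        out[i] = q
    return out


def mean_abs_error(original, compressed):
    """Average distance between each original value and its compressed version."""
    return sum(abs(x - y) for x, y in zip(original, compressed)) / len(original)


# Toy "model": mostly tiny numbers plus two large ones.
weights = [0.02, -0.01, 0.03, 2.5, -0.04, 0.01, -3.1, 0.05, 0.00, -0.02]

naive = quantize_uniform(weights, 2)                 # everything squeezed to 2 bits
mixed = mixed_precision(weights, keep_fraction=0.2)  # two largest values kept as-is

print("naive error:", mean_abs_error(weights, naive))
print("mixed error:", mean_abs_error(weights, mixed))
```

On this toy data, squeezing everything the same way lets the two big numbers stretch the scale, so the small numbers all get rounded badly. Setting the big ones aside first leaves the small ones much closer to their original values. The trade-off is that the kept values still cost full precision, so the average comes out somewhat above 2 bits per number.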

The end result is a model that behaves much like the original, but is dramatically smaller.

In some cases, up to 8X smaller.

Source: Google
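The 8X figure is simple arithmetic: 16 bits divided by 2 bits. Here is a quick back-of-the-envelope sketch of what that means for storage. The 70-billion-parameter model is a hypothetical chosen for illustration, not a figure from Google’s paper, and the calculation counts only the model’s numbers, not any other memory it needs while running.

```python
def model_size_gb(num_params, bits_per_param):
    """Storage for the model's numbers alone, in gigabytes (1 GB = 10**9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


params = 70_000_000_000  # hypothetical model with 70 billion numbers

full = model_size_gb(params, 16)  # stored at 16 bits each
small = model_size_gb(params, 2)  # stored at 2 bits each

print(f"{full:.1f} GB -> {small:.1f} GB ({full / small:.0f}x smaller)")
# prints: 140.0 GB -> 17.5 GB (8x smaller)
```

In this example, 140 GB is more memory than a typical laptop has. 17.5 GB is within reach of a well-equipped one.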

And that’s where this stops being a technical story and starts becoming a financial one.

Because building these models is only part of the cost.

The real expense comes from running them.

Every time you ask a model a question, it has to run on specialized hardware and draw power while it does so.

That’s why companies are pouring so much money into data centers: the current generation of AI needs enormous infrastructure just to keep up with demand.

TurboQuant changes that equation.

Smaller models need less memory, less hardware and less energy to run.

Which means the same data center can handle more work. Or the same workload can run on much cheaper systems.

That’s where the potential $100 billion in savings comes from.

Not from eliminating data centers…

But from needing fewer of them to do the same job. And in some cases, not needing them at all.

Because once models get small enough, they don’t have to live in a data center anymore.

They can run on your laptop.

As we’ve talked about before, when the cost of something drops, people don’t use less of it. They use more.

When cloud computing got cheaper, companies didn’t scale back. Instead, they built more software. When bandwidth expanded, people didn’t use the internet less. They simply streamed more videos.

AI will likely follow the same path.

If these models become cheap enough to run locally, companies likely won’t slow down their investments.

They’ll expand them.

They’ll run more models, in more places, across more workflows. And they’ll start putting AI into systems that never could have supported it before.

Right now, most advanced AI systems live inside large, centralized data centers.

Image: Hanwha Data Centers

But as models get smaller and more efficient, that won’t always be the case.

They’ll start running closer to the user. On local machines, embedded systems and edge devices that don’t rely on constant cloud access.

That’s exactly what I saw from companies like Lenovo and Motorola at CES this year, and it’s the direction Apple is moving in with its latest devices too.

TurboQuant won’t replace the data center.

But it will reduce how dependent we are on it.

And over time, that should expand adoption even further.

Here’s My Take

For the past two years, the playbook has been simple. Spend more on compute, get better results.

What Google is showing with TurboQuant is that efficiency can move the needle too.

That creates a different kind of advantage.

The companies building AI infrastructure today might not end up spending less going forward. But they could start getting a lot more out of every dollar they put in.

If that happens, AI adoption should move faster, not slower.

Because once the cost of intelligence drops far enough…

People will find more ways to use it.

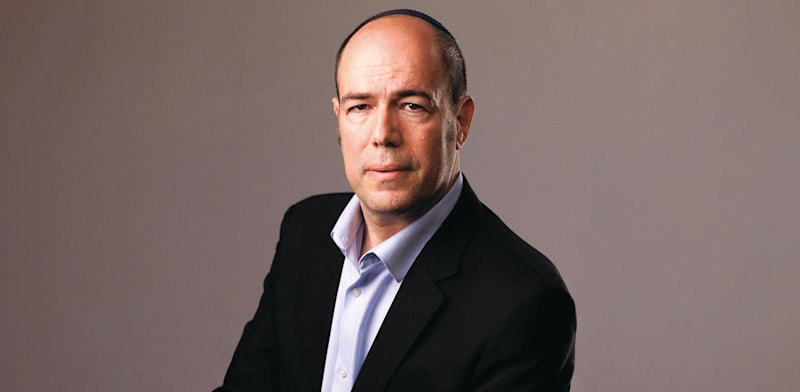

Regards,

Ian King
Chief Strategist, Banyan Hill Publishing

Editor’s Note: We’d love to hear from you!

If you want to share your thoughts or suggestions about the Daily Disruptor, or if there are any specific topics you’d like us to cover, just send an email to [email protected].

Don’t worry, we won’t reveal your full name in the event we publish a response. So feel free to comment away!